Benefits and Limitations of Automated Software Testing: Literature Review and Survey

| ✅ Paper Type: Free Essay | ✅ Subject: Information Technology |

| ✅ Wordcount: 2432 words | ✅ Published: 18 May 2020 |

BENEFITS AND LIMITATIONS OF AUTOMATED SOFTWARE TESTING:

SYSTEMATIC LITERATURE REVIEW AND PRACTITIONER SURVEY

Abstract

Automated testing enables faster iterations, more reliable test outcomes and, ultimately, better products, and automated testing tools are becoming increasingly common and easily accessible. This study makes two contributions. First, it systematically reviews the academic literature on the benefits and limitations of software test automation. Second, it surveys practitioners on the same benefits and limitations; 115 software professionals responded. By examining the benefits of test automation together with its limitations, the research aims to close a gap in existing work. In addition, benefits were more often identified from stronger sources of evidence, whereas limitations more often stemmed from experience reports.


List of Tables

Table 4: Survey Results for Benefits

Table 5: Survey Results For Limitations

Table 6: Overall Satisfaction with AST

List of Figures

Figure 1: Benefits of Automated Testing

Figure 2: Limitations of Automated Testing


Kai Petersen, Mika Mäntylä

1. INTRODUCTION

From a research perspective, test automation is a well-established field. Its long-term vision is 100% automatic testing (Bertolino, 2007), but this vision has not been achieved in practice (Berner, Weber and Keller, 2005). Today, leading companies use automated testing to extend product service life, reduce costly and repetitive development effort, and improve the quality of each iteration. Developers and testers use automated tests to overcome the shortcomings of manual testing, reduce the time needed to create tests and, ultimately, save money. It is now easier than before to exploit the advantages of automated testing, which allows continuous testing at a lower overall cost than manual testing.

This report provides a concise overview of automated software testing. It is organized as follows. Section 2 states the research goal. Section 3 describes the systematic literature review and the benefits and limitations it identified. Section 4 describes the practitioner survey, Section 5 presents the survey results, and Section 6 concludes. The references are given in Section 7.

2. RESEARCH GOAL

The study aims to answer the following question: are the benefits and limitations of test automation reported in empirical studies and experience reports also recognized across industry?

3. SYSTEMATIC LITERATURE REVIEW

A systematic literature review, following the guidelines of Kitchenham and Charters (2007), is used to identify the benefits and limitations of automated software testing (AST). The review concentrates on the period 1999 to 2011. During this period testing tools were developed and matured, which influences how their benefits and limitations are assessed. The databases searched were IEEE Xplore, Engineering Village (including Inspec and Compendex), Scopus, the ACM Digital Library and Google Scholar. The search returned a total of 24,706 papers, which were then filtered in the steps shown in Table 1. To check whether the reviewers interpreted the inclusion and exclusion criteria in the same way, the selection based on titles and abstracts was first carried out on a pilot set of 50 papers. The kappa statistic κ, a measure of agreement between raters (Berry and Mielke, 1988), was 0.605, which indicates substantial agreement. This suggests that the inclusion and exclusion criteria were clearly defined.
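The following minimal sketch shows how such an agreement value is obtained. It computes Cohen's kappa for two reviewers who each mark papers as include or exclude, using κ = (p_o - p_e) / (1 - p_e). Here p_o is the observed agreement and p_e is the agreement expected by chance from each reviewer's label frequencies. Berry and Mielke (1988) generalize kappa to interval data and multiple raters, whereas this sketch assumes the simpler two-rater, nominal case. The function name `cohen_kappa` and the ten decisions are hypothetical; they are not the 50-paper pilot data.

```python
from collections import Counter

def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning nominal labels to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same, non-empty set of items")
    n = len(rater_a)
    # Observed agreement: fraction of items on which both raters chose the same label.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal label frequencies, summed.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    p_e = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if p_e == 1:
        return 1.0  # both raters used one identical label for every item
    return (p_o - p_e) / (1 - p_e)

# Hypothetical include/exclude decisions by two reviewers on ten papers.
decisions_a = ["in", "in", "out", "out", "out", "in", "out", "out", "in", "out"]
decisions_b = ["in", "out", "out", "out", "out", "in", "out", "in", "in", "out"]

print(f"kappa = {cohen_kappa(decisions_a, decisions_b):.3f}")  # kappa = 0.583
```

The reviewers agree on 8 of 10 papers (p_o = 0.8), but chance alone would give p_e = 0.52. Kappa therefore credits only the agreement beyond chance.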

The selected papers were analyzed using thematic analysis and narrative summaries. Table 2 shows the sources of evidence used. Most of the included studies are empirical: 10 experiments and 7 industrial case studies out of 25.

Table 1: Paper Selection Steps

| Criteria | No. of articles (in → out) |
|---|---|
| Duplicate removal | 24706 → 19920 |
| Title check | 19920 → 9456 |
| Abstract check | 9456 → 1470 |
| Introduction and conclusion check | 1470 → 227 |
| Full-text reading | 227 → 25 |
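The selection funnel in Table 1 can be checked with a few lines of Python. This sketch uses only the counts from the table; the names `STAGES` and `retention_rates` are illustrative. It verifies that each step starts from the number of articles the previous step kept, then prints the share of incoming articles each step retained. Overall, 25 of the 24,706 retrieved papers, about 0.1%, were included.

```python
# Article counts per selection step, copied from Table 1 as (step, in, out).
STAGES = [
    ("Duplicate removal", 24706, 19920),
    ("Title check", 19920, 9456),
    ("Abstract check", 9456, 1470),
    ("Introduction and conclusion check", 1470, 227),
    ("Full-text reading", 227, 25),
]

def retention_rates(stages):
    """Return (step, fraction of incoming articles kept) for each selection step."""
    # Each step must start from the number of articles the previous step kept.
    for (_, _, kept), (name, incoming, _) in zip(stages, stages[1:]):
        if kept != incoming:
            raise ValueError(f"count mismatch before step {name!r}")
    return [(name, kept / incoming) for name, incoming, kept in stages]

rates = retention_rates(STAGES)
for name, rate in rates:
    print(f"{name:<35} kept {rate:6.1%}")

overall = STAGES[-1][2] / STAGES[0][1]
print(f"{'Overall':<35} kept {overall:6.2%}")
```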

Table 2: Sources of Evidence

| Method | References | No. of articles |
|---|---|---|
| Experimental | (Saglietti and Pinte, 2010), (Al Dallal, 2009), (Tan and Edwards, 2008), (Alshraideh, 2008), (du Bousquet and Zuanon, 1999), (Malekzadeh and Ainon, 2010), (Leitner et al., 2007), (Kansomkeat and Rivepiboon, 2003), (Burnim and Sen, 2008) and (Choi and Lee, 2006) | 10 |
| Industrial case study | (Haugset and Hanssen, 2008), (Liu, 2000), (Hao et al., 2009), (Bashir and Banuri, 2008), (Shan and Zhu, 2009), (Karhu et al., 2009) and (Wissink and Amaro, 2006) | 7 |
| Experience report | (Perry and Rice, 2013), (Pocatilu, 2002), (Pettichord, 1999), (Fecko and Lott, 2002), (Persson and Yilmazturk, 2004) and (Fewster and Graham, 1999) | 8 |

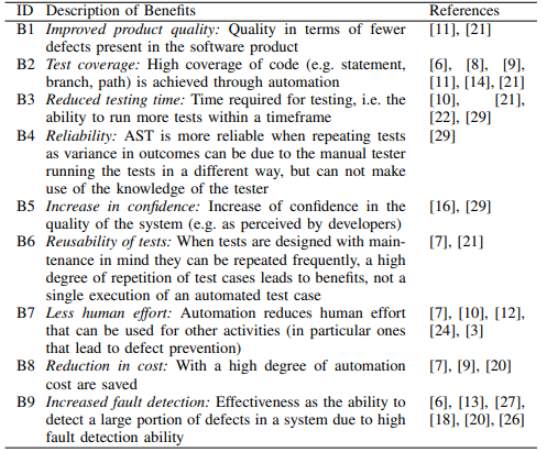

The literature review identified a number of benefits and limitations of automated testing. They are summarized in Figure 1 and Figure 2.

Following are the benefits of automated testing:

- Improved product quality: higher quality in terms of fewer defects in the software product.

- High test coverage: automation achieves high code coverage (e.g. statement, branch and path coverage).

- Reduced testing time: less time is needed for testing, i.e. more tests can be run in a given period.

- Reliability: automated tests give more consistent results when repeated, because, unlike manual testing, their outcome does not depend on the individual tester's expertise.

- Increased confidence: greater confidence in the quality of the system (e.g. as perceived by developers).

- Reusability of tests: when tests are designed with maintenance in mind, they can be rerun many times. The benefit comes from the large number of repeated test executions rather than from any single test case.

- Less human effort: automation frees human effort for other activities (in particular, defect prevention).

- Cost reduction: a high degree of automation saves costs.

- Improved fault detection: a strong fault-detection capability means that a large share of a system's faults are found.
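The following is a minimal, purely illustrative Python sketch that makes the reusability, reliability and coverage benefits concrete. It uses the standard `unittest` module. The function `apply_discount`, the test data and the helper `run_regression` are hypothetical and are not taken from any reviewed study. The table-driven test can be extended by adding rows. The same suite can be rerun any number of times with identical checks. The two tests also exercise both branches of the function, which is a small instance of branch coverage.

```python
import io
import unittest

def apply_discount(price, percent):
    """Hypothetical function under test: price after a percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (100 - percent) / 100, 2)

# Table-driven cases: covering a new scenario means adding one row, not a new test.
CASES = [
    (100.0, 0, 100.0),
    (100.0, 25, 75.0),
    (19.99, 10, 17.99),
    (50.0, 100, 0.0),
]

class DiscountRegressionTests(unittest.TestCase):
    def test_valid_discounts(self):
        # Exercises the branch where percent is in range.
        for price, percent, expected in CASES:
            with self.subTest(price=price, percent=percent):
                self.assertEqual(apply_discount(price, percent), expected)

    def test_rejects_out_of_range_percent(self):
        # Exercises the error branch, so both branches are covered.
        with self.assertRaises(ValueError):
            apply_discount(10.0, 150)

def run_regression(times=3):
    """Run the same suite `times` times and return whether each run passed."""
    outcomes = []
    for _ in range(times):
        suite = unittest.defaultTestLoader.loadTestsFromTestCase(DiscountRegressionTests)
        runner = unittest.TextTestRunner(stream=io.StringIO(), verbosity=0)
        outcomes.append(runner.run(suite).wasSuccessful())
    return outcomes

if __name__ == "__main__":
    print(run_regression())  # [True, True, True]
```

Once written, the suite costs almost nothing to rerun. This illustrates the literature's point that the payoff comes from repeated executions rather than from any single test.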

Following are the limitations of automated testing:

- Automation can’t fully replace manual tests. Not all test tasks can be readily automated, particularly those requiring comprehensive domain understanding.

- Failure to achieve the expected goals. Organizations may be attracted by the quick gains of running automated tests but fail to achieve real, lasting benefits.

- Changes in the technology and evolution of the software product make automated tests difficult to maintain.

- Test automation needs time to mature. Building the test infrastructure and the automated tests takes time, so reaching automation maturity (and the associated benefits) is a lengthy process.

- Organizations have unrealistic expectations of AST, hoping to maximize cost savings (e.g. on unproductive testing activities).

- Choosing a suitable approach is difficult (e.g. deciding which level of testing to automate for which purpose). This leads to inappropriate strategies that fail to exploit the benefits of AST.

- Lack of skilled people. Test automation requires many skills (for example, knowledge of testing tools, general software development skills, and domain and system knowledge).

Figure 1: Benefits of Automated Testing

Figure 2: Limitations of Automated Testing

4. RESEARCH SURVEY

The survey was conducted to determine whether the benefits and limitations identified in the literature apply to the software industry. The online survey was distributed through AST, Yahoo Groups, Google Groups and LinkedIn, and was also e-mailed to corporate contacts. A total of 115 valid responses were received. Respondents cover a wide range of domains, most commonly web, finance and healthcare systems. Table 3 shows the system types. The answers sum to 214 from 115 respondents, so respondents could evidently select more than one system type, and the percentages are shares of all answers.

Table 3: System Types

| System type | Total answers | % |
|---|---|---|
| Web | 56 | 26.17 |
| Finance | 37 | 17.29 |
| Healthcare | 26 | 12.15 |
| Mobile | 19 | 8.88 |
| Other | 19 | 8.88 |
| Telecommunication | 18 | 8.41 |
| ERP | 17 | 7.94 |
| Embedded system | 11 | 5.14 |
| Games/Entertainment | 8 | 3.74 |
| Others | 3 | 1.40 |
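The percentages in Table 3 can be recomputed from the answer counts. This minimal Python sketch uses only the counts from the table and the 115 respondents reported in the text. It divides each count by the total number of answers and also reports the average number of system types named per respondent. The names `SYSTEM_TYPES` and `shares` are illustrative.

```python
# Answer counts per system type, copied from Table 3.
SYSTEM_TYPES = {
    "Web": 56, "Finance": 37, "Healthcare": 26, "Mobile": 19, "Other": 19,
    "Telecommunication": 18, "ERP": 17, "Embedded system": 11,
    "Games/Entertainment": 8, "Others": 3,
}
RESPONDENTS = 115  # valid survey responses reported in the text

total_answers = sum(SYSTEM_TYPES.values())
# Share of all answers (not of respondents), rounded to two decimals as in the table.
shares = {name: round(100 * count / total_answers, 2) for name, count in SYSTEM_TYPES.items()}

for name, pct in shares.items():
    print(f"{name:<20}{pct:6.2f} %")
print(f"{total_answers} answers from {RESPONDENTS} respondents: "
      f"{total_answers / RESPONDENTS:.2f} system types per respondent on average")
```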

5. RESULTS

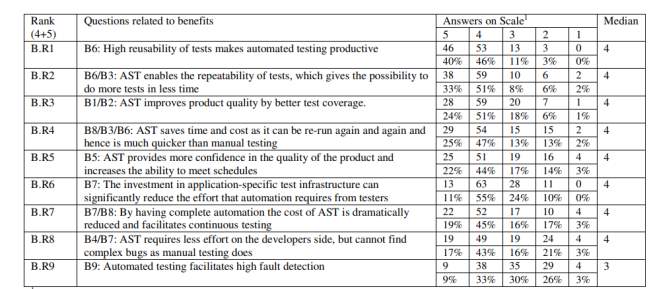

Table 4 presents the survey results for the benefits of AST. Each benefit statement is linked to the corresponding benefit identified in the systematic literature review.

Table 4: Survey Results for Benefits

5=completely agree, 4=agree, 3=neutral, 2=disagree, 1=completely disagree

- B.R1: Overall, 86% of participants agree that automated test cases are highly reusable.

- B.R2: A total of 84% agree with this benefit. However, it was stressed that this should not be tested.

- B.R3: 75% agree that automation leads to improved test coverage.

- B.R4: 72 percent of participants agree that re-running automated tests saves time and cost compared with manual testing.

- B.R5: 66% of participants agree that AST is associated with greater confidence in product quality and in the ability to meet schedules.

- B.R6: In total, 66% agree, but the practitioners gave no specific explanations.

- B.R7: 64 percent of respondents agree that a high degree of automation lowers testing cost and at the same time enables continuous testing.

- B.R8: In total, 60 percent agree with the statement, while a significant proportion of participants (24 percent) disagree.

- B.R9: Only 33 percent agree that automated testing enables higher fault detection.
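The essay does not state exactly how the agreement percentages are computed. A common convention, assumed here, is to count ratings of 4 (agree) and 5 (completely agree) on the scale above as agreement. The following Python sketch applies that assumed rule to hypothetical ratings from 20 respondents, not to the actual survey data. The function name `agreement_summary` is illustrative.

```python
from collections import Counter

def agreement_summary(ratings):
    """Split 1-5 Likert ratings into agree (4-5), neutral (3) and disagree (1-2) shares."""
    if not ratings:
        raise ValueError("no ratings given")
    if any(r not in (1, 2, 3, 4, 5) for r in ratings):
        raise ValueError("ratings must be integers from 1 to 5")
    counts = Counter(ratings)
    n = len(ratings)
    return {
        "agree": (counts[4] + counts[5]) / n,
        "neutral": counts[3] / n,
        "disagree": (counts[1] + counts[2]) / n,
    }

# Hypothetical ratings of one statement by 20 respondents (not survey data).
ratings = [5] * 5 + [4] * 7 + [3] * 5 + [2] * 2 + [1] * 1
summary = agreement_summary(ratings)
for category, share in summary.items():
    print(f"{category:<9}{share:.0%}")  # agree 60%, neutral 25%, disagree 15%
```

Reporting the disagree share alongside the agree share matters. A statement such as B.R8 can have majority agreement and still a sizeable group that disagrees.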

Table 5 presents the limitations in the same way as the benefits.

Table 5: Survey Results For Limitations

5=completely agree, 4=agree, 3=neutral, 2=disagree, 1=completely disagree

- L.R1: 89 percent of participants recognize this limitation.

- L.R2: 88 percent of participants support this statement.

- L.R3: 81 percent agree that effective automation is challenging and requires the necessary skills.

- L.R4: 77 percent agree that, compared with manual testing, AST requires a high investment in purchasing tools and in training staff to use them.

- L.R5: This statement is also included because it raises the drawback that complex defects cannot be found.

- L.R6: 45% agree that the testing tools available on the market are inconsistent.

- L.R7: The responses to this statement clearly show that testers do not believe in complete automation (only 6 percent of participants do), so complete automation remains an obstacle.

Table 6: Overall Satisfaction with AST

6. CONCLUSION

Automated testing enables faster iteration, better test outcomes and, ultimately, higher-quality products. However, the initial setup of automated testing can be time-consuming and expensive. Automated testing tools are increasingly popular and easily accessible. Automated testing requires more time for planning and development than manual testing.

This study makes two contributions. First, it provides a systematic review of the benefits and limitations of software test automation reported in the academic literature. Second, it presents a practitioner survey of the benefits and limitations of software test automation.

7. REFERENCES

- Al Dallal, J., 2009, March. Automation of object-oriented framework application testing. In 2009 5th IEEE GCC Conference & Exhibition (pp. 1-5). IEEE.

- Alshraideh, M., 2008. A complete automation of unit testing for JavaScript programs. Journal of Computer Science, 4(12), p.1012.

- Bashir, M.F. and Banuri, S.H.K., 2008, October. Automated model based software test data generation system. In 2008 4th International Conference on Emerging Technologies (pp. 275-279). IEEE.

- Berner, S., Weber, R. and Keller, R.K., 2005, May. Observations and lessons learned from automated testing. In Proceedings of the 27th international conference on Software engineering (pp. 571-579). ACM.

- Berry, K.J. and Mielke Jr, P.W., 1988. A generalization of Cohen’s kappa agreement measure to interval measurement and multiple raters. Educational and Psychological Measurement, 48(4), pp.921-933.

- Bertolino, A., 2007, May. Software testing research: Achievements, challenges, dreams. In 2007 Future of Software Engineering (pp. 85-103). IEEE Computer Society.

- Burnim, J. and Sen, K., 2008, September. Heuristics for scalable dynamic test generation. In Proceedings of the 2008 23rd IEEE/ACM international conference on automated software engineering (pp. 443-446). IEEE Computer Society.

- Choi, K.C. and Lee, G.H., 2006, May. Automatic test approach of web application for security (autoinspect). In International Conference on Computational Science and Its Applications (pp. 659-668). Springer, Berlin, Heidelberg.

- du Bousquet, L. and Zuanon, N., 1999, October. An overview of Lutess a specification-based tool for testing synchronous software. In 14th IEEE International Conference on Automated Software Engineering (pp. 208-215). IEEE.

- Fecko, M.A. and Lott, C.M., 2002. Lessons learned from automating tests for an operations support system. Software: Practice and Experience, 32(15), pp.1485-1506.

- Fewster, M. and Graham, D., 1999. Software test automation: effective use of test execution tools. ACM Press/Addison-Wesley Publishing Co.

- Hao, D., Zhang, L., Liu, M.H., Li, H. and Sun, J.S., 2009. Test-data generation guided by static defect detection. Journal of Computer Science and Technology, 24(2), pp.284-293.

- Haugset, B. and Hanssen, G.K., 2008, August. Automated acceptance testing: A literature review and an industrial case study. In Agile 2008 Conference (pp. 27-38). IEEE.

- Kansomkeat, S. and Rivepiboon, W., 2003, September. Automated-generating test case using UML statechart diagrams. In Proceedings of the 2003 annual research conference of the South African institute of computer scientists and information technologists on Enablement through technology (pp. 296-300). South African Institute for Computer Scientists and Information Technologists.

- Karhu, K., Repo, T., Taipale, O. and Smolander, K., 2009, April. Empirical observations on software testing automation. In 2009 International Conference on Software Testing Verification and Validation (pp. 201-209). IEEE.

- Kitchenham, B. and Charters, S., 2007. Guidelines for performing systematic literature reviews in software engineering.

- Leitner, A., Ciupa, I., Meyer, B. and Howard, M., 2007, January. Reconciling manual and automated testing: The autotest experience. In 2007 40th Annual Hawaii International Conference on System Sciences (HICSS’07) (pp. 261a-261a). IEEE.

- Liu, C., 2000, June. Platform-independent and tool-neutral test descriptions for automated software testing. In Proceedings of the 22nd international conference on Software engineering (pp. 713-715). ACM.

- Malekzadeh, M. and Ainon, R.N., 2010, February. An automatic test case generator for testing safety-critical software systems. In 2010 The 2nd International Conference on Computer and Automation Engineering (ICCAE) (Vol. 1, pp. 163-167). IEEE.

- Perry, W. and Rice, R., 2013. Surviving the top ten challenges of software testing: a people-oriented approach. Addison-Wesley.

- Persson, C. and Yilmazturk, N., 2004, September. Establishment of automated regression testing at ABB: industrial experience report on 'avoiding the pitfalls'. In Proceedings. 19th International Conference on Automated Software Engineering, 2004. (pp. 112-121). IEEE.

- Pettichord, B., 1999. Seven steps to test automation success. Star West, November.

- Pocatilu, P., 2002. Automated software testing process. Economy Informatics, 1, pp.97-99.

- Saglietti, F. and Pinte, F., 2010, September. Automated unit and integration testing for component-based software systems. In Proceedings of the International Workshop on Security and Dependability for Resource Constrained Embedded Systems (p. 5). ACM.

- Shan, L. and Zhu, H., 2009. Generating structurally complex test cases by data mutation: A case study of testing an automated modelling tool. The Computer Journal, 52(5), pp.571-588.

- Tan, R.P. and Edwards, S., 2008, April. Evaluating automated unit testing in Sulu. In 2008 1st International Conference on Software Testing, Verification, and Validation (pp. 62-71). IEEE.

- Wissink, T. and Amaro, C., 2006, September. Successful test automation for software maintenance. In 2006 22nd IEEE International Conference on Software Maintenance (pp. 265-266). IEEE.
